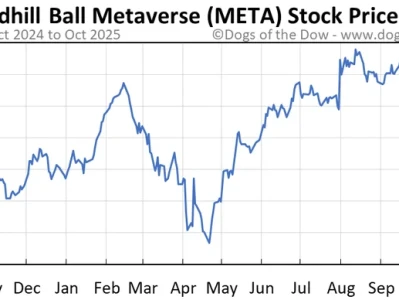

Alright, buckle up, everyone: something huge is brewing in the world of AI, and it's not just about Nvidia anymore. We're talking about Meta, Google, and a potential paradigm shift in how AI is powered. Meta Platforms (META), as you probably know, has been making some serious waves, and not just because of its metaverse ambitions. The real story? Its massive investment in AI.

But here's the thing: they might be about to shake up the entire chip landscape by cozying up with… Google? Yeah, that Google. The Information is reporting that Meta is in talks to spend billions on Google's Tensor Processing Units (TPUs) for their data centers by 2027, and potentially even rent them as early as next year. When I first read that, I had to rub my eyes. It’s like seeing Coke and Pepsi sharing a soda fountain.

This isn't just a business deal; it's a validation of Google's AI silicon. For years, Nvidia has been the undisputed king of the hill, the go-to for anyone needing serious computing power for AI. But Google's TPUs? They've been quietly building them, refining them, and now, they might just be ready to steal some of Nvidia's thunder. Think of it like the early days of personal computing. Intel dominated, then AMD came along and suddenly, there was a real choice. That's what Google is positioning itself to be.

The Big Picture

Now, why is this such a big deal? Well, for starters, it diversifies the supply chain. Relying solely on one company for critical components is always a risk. Remember the chip shortages of the past few years? Nightmares, right? Meta getting cozy with Google would give it a crucial alternative, helping ensure that the AI revolution doesn't get bottlenecked by a single supplier.

But it's more than just risk mitigation. Google's TPUs are designed specifically for AI workloads. They're not general-purpose chips trying to be good at everything; they're laser-focused on AI, which means they can potentially offer better performance and efficiency for specific tasks. Jay Goldberg, an analyst at Seaport, called Google's earlier deal with Anthropic a "really powerful validation" for TPUs, and he’s spot on. It's like having a specialized tool for a specialized job – you wouldn't use a hammer to screw in a lightbulb, would you?

And the scale of this is mind-boggling. Bloomberg Intelligence estimates that Meta's capital expenditure for 2026 will be at least $100 billion, with $40-$50 billion earmarked for inferencing-chip capacity. That's a lot of chips, and the fact that Meta is even considering steering part of that money toward Google's TPUs speaks volumes about its confidence in the technology.

What does this mean for Nvidia? Well, the stock (NVDA) took a bit of a hit on the news, which is understandable. Michael Burry, the investor who famously predicted the 2008 financial crisis, has even raised concerns about an AI bubble and Nvidia's accounting practices. But let's be clear: Nvidia isn't going anywhere. It's still a powerhouse, and its GPUs are still essential for many AI applications. But the landscape is shifting. It's becoming a multipolar world, and that's ultimately good for innovation.

Meta's potential move to Google TPUs isn't just about cost savings or supply chain diversification. It's a strategic bet on the future of AI, a recognition that specialized hardware is key to unlocking the next level of performance. And it’s not just Meta. Amazon (AMZN) and Microsoft (MSFT) are also developing their own custom silicon. This trend suggests a move away from a one-size-fits-all approach to AI hardware and towards a more tailored, optimized ecosystem.

But here's where it gets really interesting. This competition isn't just about who makes the fastest chip. It's about who can build the most complete AI platform, from hardware to software to cloud services. Google, with its vast cloud infrastructure and its cutting-edge AI research, is in a unique position to offer a compelling alternative to Nvidia's ecosystem. And Meta, by partnering with Google, is essentially betting that this alternative will be a winning one.

Now, I know what some of you might be thinking: "Is this just hype? Are we in another AI bubble?" And those are valid questions. But here's the thing: AI is already transforming our world, from healthcare to transportation to entertainment. It's not a question of if AI will change everything, but how. And the hardware that powers these AI systems is absolutely critical.

This is the kind of development that reminds me why I got into this field in the first place. This isn't just about faster chips or bigger numbers; it's about unlocking human potential. What happens when AI becomes even more accessible, more efficient, and more powerful? What new possibilities will emerge? What new problems will we solve?

This is where we need to be thoughtful. As AI becomes more integrated into our lives, we need to ensure that it's used responsibly and ethically. We need to address issues like bias, privacy, and job displacement. The future of AI is not just about technology; it's about humanity.

The Dawn of a New Era

So, what's the real takeaway here? I think it's this: the AI revolution is just getting started. Meta's potential partnership with Google is a sign of things to come, a glimpse into a future where AI hardware is more diverse, more specialized, and more accessible. And that future, my friends, is looking brighter than ever.